Most AI companies ship features. Anthropic ships principles. That distinction shapes everything about how Claude looks, feels, and behaves. Since the company launched Claude Design on April 17, 2026, the team has pushed a bold thesis: safety constraints don't limit creativity; they fuel it. The design work happening at Anthropic's labs tells a bigger story about where AI interfaces are headed. It's a story about restraint, intention, and the quiet power of saying less. If you're building products that talk to customers, or you're just curious about how the world's most cautious AI lab thinks about user experience, the design philosophy behind Claude has lessons worth stealing.

The Anthropic Design Philosophy: Safety as a Creative Constraint

Anthropic doesn't treat safety as a checkbox. It's the starting point for every design decision. That sounds abstract until you see how it plays out in the product. Every interaction Claude has with a user runs through a framework where honesty, helpfulness, and harm avoidance compete for priority. The design team's job is making that invisible.

Constitutional AI and the UI of Alignment

Constitutional AI is Anthropic's approach to training models using a written set of principles. Think of it as a rulebook the model internalizes. The design challenge? Surfacing those principles without making the user feel policed. Claude's interface doesn't flash warning labels or lecture you. Instead, the guardrails show up as tone shifts, gentle redirections, and transparent refusals. You'll notice Claude says "I'm not confident about that" instead of just guessing. That's not a bug. It's a design decision rooted in the constitutional framework. The UI reflects the values baked into the model itself.

Balancing Utility with Ethical Guardrails

There's a real tension between being useful and being safe. Too many guardrails and the tool feels broken. Too few and you've got a liability. Anthropic's design team walks this line by studying where users actually hit friction. They review chat transcripts to find moments where Claude's caution frustrated someone trying to do legitimate work. That data feeds back into both model training and interface updates. The result is a product that feels responsive without being reckless. If you run a business that automates customer conversations, this balance matters to you too. Platforms like Wexio, which uses Claude alongside GPT-4 for AI-powered auto-replies, face the same tradeoff every day: helpful enough to close tickets, careful enough to protect your brand.

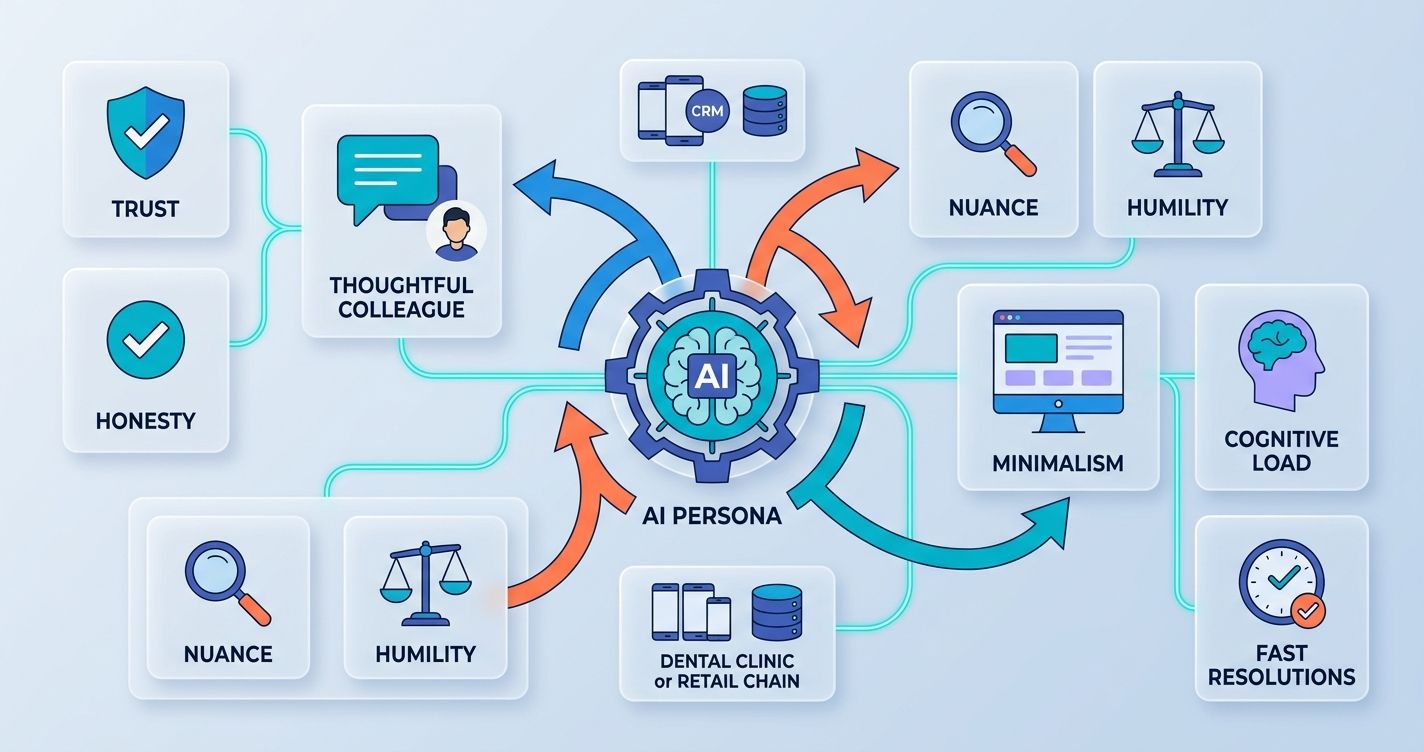

Crafting the Persona: The Voice and Tone of Claude

Claude doesn't sound like Siri. It doesn't sound like a search engine. It sounds like a thoughtful colleague who happens to know a lot. That persona didn't happen by accident.

Designing for Nuance and Intellectual Humility

Most chatbots default to confidence. Claude defaults to honesty. The design team built a voice that admits uncertainty, qualifies claims, and invites follow-up questions. This isn't just a personality quirk. It's a strategic choice that builds trust over time. Users who interact with Claude regularly report feeling like the tool respects their intelligence. That's the goal. The tone avoids both the robotic flatness of older assistants and the performative enthusiasm of newer ones. It sits in a middle register: warm, direct, and grounded.

The Role of Minimalism in Reducing User Cognitive Load

Anthropic's interface strips away clutter. There's no sidebar full of suggested prompts. No animated mascot. No gamification. The design team believes that reducing cognitive load lets users focus on what they're actually trying to do. This minimalism extends to Claude's responses, which tend to be structured but not bloated. Paragraphs stay short. Lists appear only when they help. The design language says: your time matters, and we won't waste it. That principle applies whether you're building an AI chatbot for a dental clinic or a retail chain. Fewer distractions mean faster resolutions.

Interface Innovation: Beyond the Traditional Chatbot Box

The standard chat window is a rectangle where text goes in and text comes out. Anthropic's design work at the labs pushes well past that model.

Artifacts: Redefining Collaborative Workspaces

Artifacts changed how people use Claude. Instead of just generating text in a chat thread, Claude can produce standalone documents, code snippets, and visual outputs in a separate panel. You can edit them, iterate on them, and export them. This turns a conversation into a workspace. The design insight here is subtle but powerful: people don't just want answers, they want drafts they can work with. Artifacts treat AI output as a starting point, not a finished product. That collaborative framing reduces the pressure on the model to be perfect and gives users real agency.

Visual Language and Brand Identity at Anthropic

Anthropic's visual identity is deliberately understated. Earth tones. Clean typography. Generous white space. The brand doesn't scream "tech startup." It whispers "research lab." That's intentional. The design team wants users to associate Claude with rigor, not hype. Every color choice, icon, and animation speed reinforces that message. Even the loading indicators are calm. Compare this to the flashy, feature-packed interfaces of other AI tools, and you'll see a clear philosophical split. Anthropic bets that trust comes from restraint, not spectacle.

The Human-Centric Engineering Process

Good design at Anthropic isn't just about pixels. It's about process.

Iterative Prototyping with Large Language Models

Anthropic's design team prototypes with the model itself. They'll test a new interaction pattern by running hundreds of conversations and analyzing where users get confused or drop off. This isn't traditional A/B testing. It's closer to live improv with a language model, adjusting prompts, system messages, and UI elements in real time. The feedback loop is tight: build, test, review transcripts, adjust. That cycle repeats weekly. If you're running automated customer flows on any platform, this same approach works. Review your chat logs regularly. They're free user research, and they'll show you exactly where your logic breaks down.
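For the chat-log review suggested above, one simple starting point is to count which step unresolved conversations ended on. The sketch below is only an illustration: the log format (a `resolved` flag plus a list of `steps`), the step names, and the `drop_off_counts` helper are all hypothetical, and your platform's export will look different.

```python
from collections import Counter

# Hypothetical export: one dict per conversation, with the flow steps the
# user reached and whether the conversation ended resolved.
conversations = [
    {"resolved": True, "steps": ["greeting", "order_lookup", "confirmation"]},
    {"resolved": False, "steps": ["greeting", "order_lookup"]},
    {"resolved": False, "steps": ["greeting", "order_lookup"]},
    {"resolved": False, "steps": ["greeting"]},
]


def drop_off_counts(conversations):
    """Count the last step reached in each unresolved conversation."""
    counts = Counter()
    for conversation in conversations:
        if not conversation["resolved"] and conversation["steps"]:
            counts[conversation["steps"][-1]] += 1
    return counts


counts = drop_off_counts(conversations)
for step, n in counts.most_common():
    print(f"{step}: {n} unresolved conversation(s) ended here")
```

Steps at the top of that list are the first transcripts worth reading in full.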

User Research in the Era of Generative AI

Traditional user research assumes you know what the product does. With generative AI, the product does something different every time. That makes standard usability testing tricky. Anthropic's researchers use a mix of diary studies, prompt analysis, and session recordings to understand how real people interact with Claude over weeks, not minutes. They track median task completion times rather than relying on means alone, since a handful of outlier sessions can skew an average far more than a median. This longitudinal approach reveals patterns that one-off tests miss. It's how the team discovered that users who receive shorter initial responses engage more deeply over time.
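To see why the median matters, here is a small sketch with made-up numbers. The completion times are hypothetical, not Anthropic data; the last value stands in for an outlier session, such as a user who left the tab open.

```python
from statistics import mean, median

# Hypothetical task completion times in seconds. The final value is an
# outlier session and is included on purpose.
completion_times = [42, 38, 51, 45, 40, 47, 900]

avg = mean(completion_times)
mid = median(completion_times)

print(f"mean:   {avg:.1f} s")   # pulled far upward by the single outlier
print(f"median: {mid:.1f} s")   # stays close to a typical session
```

With these numbers the mean comes out to roughly 166 seconds while the median is 45, so one stray session nearly quadruples the "average" without changing what a typical user experienced.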

Future Frontiers: Designing for Agentic AI and Autonomy

Claude is moving toward agentic capabilities, meaning it can take actions, not just generate text. It can browse the web, write and run code, and operate computer interfaces. This shift creates massive design questions. How do you show a user what an AI agent is doing in the background? How do you build trust when the model is making decisions autonomously? Anthropic's approach leans on transparency: visible action logs, confirmation steps, and clear boundaries on what the agent can and can't do. The design challenge here mirrors what businesses face when automating customer journeys. You need your customers to feel informed, not surveilled. Platforms like Wexio handle this with features like conditional branching and explicit bot-to-human handoff triggers, such as negative sentiment detection or repeated failed responses. As AI agents become more capable, expect Anthropic's design team to keep pushing for interfaces that make autonomy feel safe rather than opaque.
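The handoff triggers mentioned above can be expressed as a small, explicit rule. The sketch below is not Wexio's or Anthropic's implementation: the `HandoffPolicy` class, the `handoff_reason` function, the threshold values, and the assumption that sentiment arrives as a score between -1 and 1 are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class HandoffPolicy:
    """Hypothetical thresholds for escalating from bot to human."""
    sentiment_threshold: float = -0.5  # assumed scale: -1 (negative) to 1 (positive)
    max_failed_responses: int = 2      # bot replies that did not resolve the request


def handoff_reason(
    sentiment_score: float,
    failed_responses: int,
    policy: HandoffPolicy = HandoffPolicy(),
) -> Optional[str]:
    """Return why the conversation should go to a human, or None to stay with the bot."""
    if sentiment_score <= policy.sentiment_threshold:
        return "negative_sentiment"
    if failed_responses >= policy.max_failed_responses:
        return "repeated_failures"
    return None


print(handoff_reason(-0.8, 0))  # negative_sentiment
print(handoff_reason(0.1, 2))   # repeated_failures
print(handoff_reason(0.1, 1))   # None
```

Returning a named reason rather than a bare true or false keeps the decision visible, which fits the transparency theme: the reason can be logged or shown to the human agent who picks up the conversation.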

The design philosophy behind Claude at Anthropic's labs isn't just about making a pretty chatbot. It's a blueprint for building AI products that people actually trust. Safety as a creative constraint, honesty as a default tone, minimalism as a respect for user attention: these principles apply whether you're a research lab or a small business automating WhatsApp replies. The companies that internalize these ideas will build better customer experiences, period.

If you're ready to put some of these principles into practice across WhatsApp, Telegram, Instagram, and Viber, Wexio gives you a unified inbox and no-code AI flows with 12+ industry-specific templates out of the box. Get started free and see what thoughtful automation looks like.