A few years ago, "AI" meant a chatbot that could answer FAQs. You'd type a question, get a canned response, and move on. That era is ending fast. A new breed of software is emerging: AI agents that don't just respond but actually do things on your behalf. They book appointments, write code, triage support tickets, and coordinate with other agents to finish complex tasks. If you've been wondering what AI agents really are and how they differ from the chatbots you already know, you're in the right place.

Understanding this shift matters because it's already reshaping how businesses interact with customers, manage operations, and compete. The AI agents market is projected to reach USD 182.97 billion by 2033, growing at a staggering 49.6% CAGR. That's not a niche trend. It's a tidal wave. Whether you run a salon, a car dealership, or a SaaS company, grasping the definition and architecture of AI agents will help you make smarter decisions about what to adopt and when.

The Evolution from Chatbots to AI Agents

Chatbots were the first wave. They followed scripts. You'd map out decision trees, anticipate user inputs, and pray nobody asked something unexpected. When they did, the bot broke. It apologized and routed you to a human.

AI agents represent a fundamentally different approach. Instead of following a fixed script, they perceive their environment, reason about goals, and take independent action. They can call APIs, query databases, send messages, and loop back to reassess whether their actions worked. The difference isn't just technical; it's philosophical. A chatbot is a vending machine. An AI agent is closer to a junior employee who can think on their feet.

This evolution didn't happen overnight. It took years of progress in natural language processing, reinforcement learning, and tool-use frameworks. But the tipping point arrived when large language models gained the ability to plan multi-step tasks and use external tools. That's when "chatbot" stopped being an adequate label.

Defining Autonomy and Agency

Autonomy is the core concept here. An AI agent operates with a degree of independence. You give it a goal, not a step-by-step script. It figures out the "how" on its own.

Agency means the system can act in the world. It doesn't just generate text; it triggers real outcomes. It sends an email. It updates a CRM record. It escalates a conversation to a human when sentiment turns negative. These aren't hypothetical capabilities. Roughly 57% of companies already have AI agents in production, handling real workflows right now.

True agency also implies a feedback loop. The agent observes the result of its action and adjusts. If a customer doesn't respond to a WhatsApp message, the agent might try Telegram next. That kind of adaptive behavior separates agents from static automation.
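
The channel-fallback behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not any platform's actual API: `reach_customer` and the `send_and_wait` callback are hypothetical names, and the callback is assumed to return `True` when the customer replies on that channel.

```python
from typing import Callable, Optional

def reach_customer(
    channels: list[str],
    send_and_wait: Callable[[str], bool],
) -> Optional[str]:
    """Try each channel in order; return the first one that gets a reply."""
    for channel in channels:
        if send_and_wait(channel):
            return channel
    return None  # nobody answered: a natural point to hand off to a human

# Simulated outcome (assumption): the customer ignores WhatsApp
# but replies on Telegram.
replies = {"whatsapp": False, "telegram": True}
reached = reach_customer(["whatsapp", "telegram"], lambda ch: replies[ch])
```

The point is that the agent checks the result of each action before choosing the next one, rather than firing a fixed sequence.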

Key Differences Between LLMs and Agentic Systems

A large language model like GPT-4 or Claude is a brain without a body. It can reason, summarize, and generate text. But on its own, it can't do anything in the real world. It has no hands.

An agentic system wraps that brain in a loop of perception, reasoning, and action. It gives the LLM access to tools: search engines, calculators, APIs, databases. The LLM decides which tool to call, interprets the result, and decides what to do next. Think of the LLM as the engine and the agentic framework as the car. The engine is powerful, but it's useless without wheels, a steering system, and brakes.
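
To make the loop concrete, here is a rough Python sketch under stated assumptions. `fake_llm` is a stand-in for a real model call, `lookup_order` is a hypothetical tool, and the `ORDERS` dictionary replaces a real database. None of these names come from a specific framework.

```python
from typing import Callable

ORDERS = {"A100": "shipped"}  # stand-in for a real order database

def lookup_order(order_id: str) -> str:
    """Hypothetical tool: return the status of an order."""
    return ORDERS.get(order_id, "unknown")

TOOLS: dict[str, Callable[[str], str]] = {"lookup_order": lookup_order}

def fake_llm(goal: str, observations: list[str]) -> dict:
    """Stand-in for an LLM: choose the next step from what has been seen."""
    if not observations:
        # Nothing observed yet, so ask for a tool call first.
        return {"tool": "lookup_order", "input": goal.split()[-1]}
    return {"final": f"Your order is {observations[-1]}."}

def run_agent(goal: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        decision = fake_llm(goal, observations)   # reason
        if "final" in decision:
            return decision["final"]
        tool = TOOLS[decision["tool"]]            # pick the tool
        observations.append(tool(decision["input"]))  # act, then perceive
    return "Escalating to a human: step limit reached."

answer = run_agent("Check status of order A100")
```

The step limit is a deliberate design choice in this sketch: a loop that can call tools should always have a way to stop and hand off.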

This distinction matters for business buyers. Plugging an LLM into your website gives you a smarter chatbot. Building an agentic system gives you a digital worker.

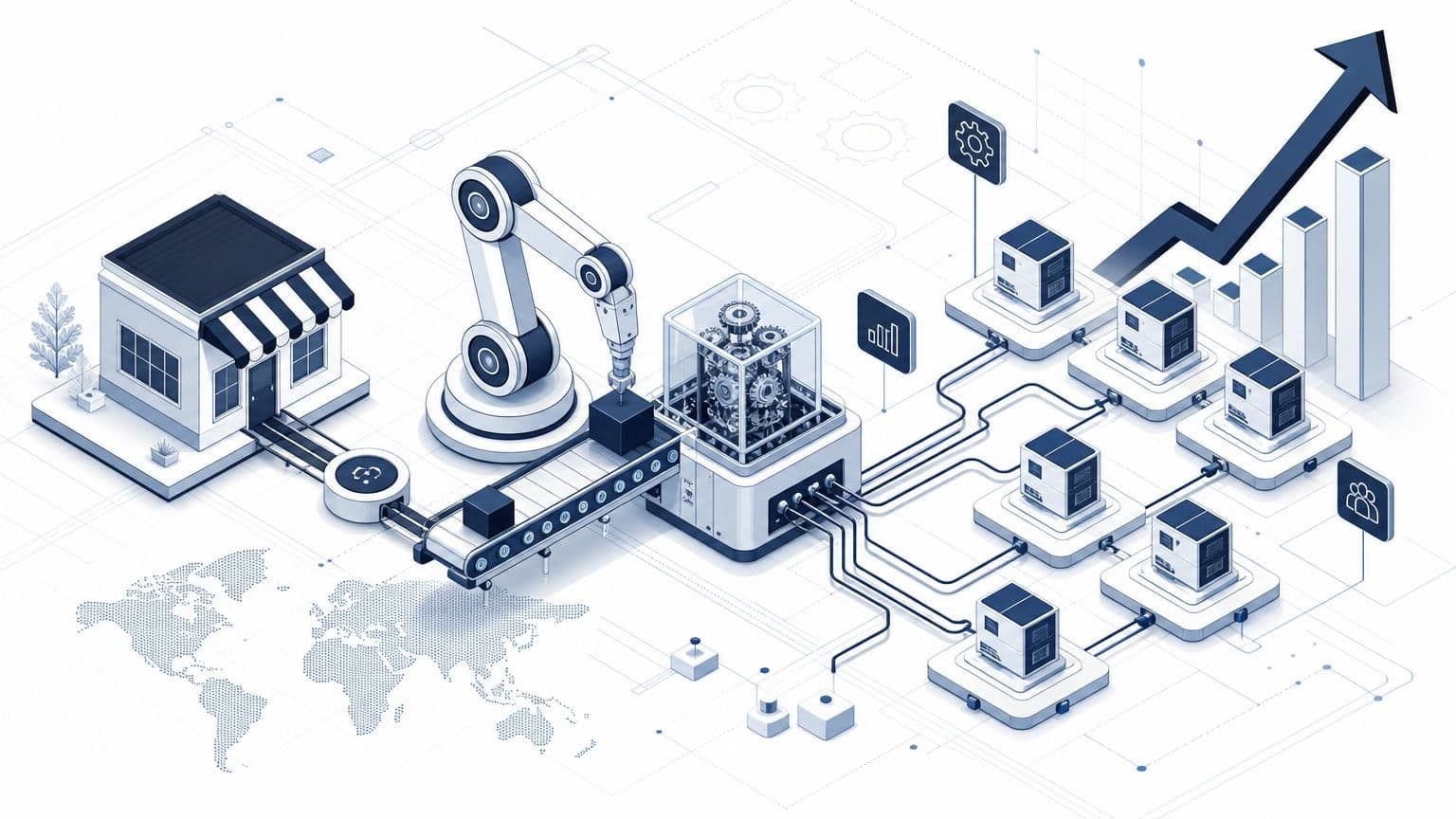

Core Components of an AI Agent Architecture

Every AI agent, regardless of its use case, shares a common anatomy. Understanding these components helps you evaluate platforms and spot marketing fluff. If a vendor calls something an "AI agent" but it's missing one of these layers, you're probably looking at a dressed-up chatbot.

The architecture breaks down into three layers: perception, reasoning, and action. Each layer handles a distinct job, and the magic happens when they work together in a continuous loop.

Perception and Sensory Input

An agent needs to know what's happening around it. For a physical robot, that means cameras and microphones. For a software agent, "perception" means ingesting data from its environment: incoming messages, API responses, database queries, webhook events.

Consider a customer service agent handling inquiries across WhatsApp, Instagram, and Telegram. Its perception layer pulls in every new message, detects the language, identifies the customer from a CRM lookup, and reads the conversation history. All of this happens before the reasoning engine even fires. The richer the perception, the better the agent's decisions.

Platforms like Wexio handle this perception layer through a unified omnichannel inbox. Instead of building separate integrations for each messaging channel, the agent receives a standardized input regardless of whether the customer wrote on Viber or Instagram. That consistency matters because it reduces the chance of errors in downstream reasoning.
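
As an illustration of what "standardized input" can mean, the sketch below maps channel-specific payloads onto one shared shape. It is not Wexio's implementation, and the payload field names (`from`, `body`, `chat_id`, `text`) are invented for the example rather than taken from any real messaging API.

```python
from dataclasses import dataclass

@dataclass
class InboundMessage:
    channel: str
    sender_id: str
    text: str

def normalize(channel: str, payload: dict) -> InboundMessage:
    """Map a channel-specific payload onto one shared message shape."""
    if channel == "whatsapp":
        # Hypothetical payload shape: {"from": ..., "body": ...}
        return InboundMessage(channel, payload["from"], payload["body"])
    if channel == "telegram":
        # Hypothetical payload shape: {"chat_id": ..., "text": ...}
        return InboundMessage(channel, str(payload["chat_id"]), payload["text"])
    raise ValueError(f"unsupported channel: {channel}")

message = normalize("telegram", {"chat_id": 42, "text": "hi"})
```

Everything downstream of `normalize` only ever sees `InboundMessage`, so the reasoning layer never needs channel-specific branches.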

Brain and Reasoning Engines

This is where the LLM lives. The reasoning engine takes the perceived input and decides what to do. It might classify the customer's intent, draft a reply, or determine that it needs more information before acting.

Modern reasoning engines use techniques like chain-of-thought prompting, where the model "thinks out loud" through a problem step by step. Some agents use multiple LLM calls in sequence: one to classify, another to plan, a third to generate output. This layered approach improves accuracy but adds latency and cost.

The reasoning engine also manages memory. Short-term memory holds the current conversation. Long-term memory stores customer preferences, past interactions, and learned patterns. Without memory, every conversation starts from zero, which is exactly the frustrating experience most chatbots deliver.
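
One simple way to picture the two kinds of memory is a bounded buffer for recent turns plus a per-customer store of facts. The `AgentMemory` class below is a hypothetical sketch. Production systems often use a database or vector store for the long-term side.

```python
from collections import deque

class AgentMemory:
    def __init__(self, short_term_size: int = 3):
        # Short-term: only the most recent turns of the current conversation.
        self.short_term: deque = deque(maxlen=short_term_size)
        # Long-term: facts that persist across conversations, per customer.
        self.long_term: dict[str, dict[str, str]] = {}

    def remember_turn(self, text: str) -> None:
        self.short_term.append(text)  # oldest turn drops off when full

    def remember_fact(self, customer_id: str, key: str, value: str) -> None:
        self.long_term.setdefault(customer_id, {})[key] = value

    def context_for(self, customer_id: str) -> dict:
        """Bundle both memories into the context handed to the reasoning step."""
        return {
            "recent": list(self.short_term),
            "profile": self.long_term.get(customer_id, {}),
        }

memory = AgentMemory(short_term_size=3)
for turn in ["a", "b", "c", "d"]:
    memory.remember_turn(turn)
memory.remember_fact("cust-1", "preferred_channel", "telegram")
context = memory.context_for("cust-1")
```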

Action Modules and Tool Integration

The action layer is what makes an agent an agent. After reasoning, it acts. It might send a message, create a calendar event, update a spreadsheet, trigger a payment, or call another agent for help.

Tool integration is the practical bottleneck for most teams. Your agent is only as useful as the tools it can access. A no-code visual flow builder, like the one Wexio offers with AI cards and conditional branching, lets non-technical teams connect these tools without writing code. That's a big deal for small and mid-size businesses that don't have dedicated engineering teams.

Security at this layer is critical too. When an agent can take real-world actions, a mistake isn't just an awkward response; it's a wrong charge, a leaked document, or a missed appointment. Enterprise-grade encryption (AES-256, TLS 1.3) and GDPR compliance aren't optional features. They're table stakes.
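
Encryption and compliance are infrastructure concerns, but the "a mistake is a wrong charge" risk can also be reduced in the action layer itself. The sketch below gates high-stakes actions behind human approval. The action names and the `execute` function are assumptions made for illustration.

```python
# Hypothetical set of actions that should never run without sign-off.
HIGH_STAKES = {"issue_refund", "charge_card"}

def execute(action: str, approved_by_human: bool = False) -> str:
    """Run low-risk actions directly; hold high-stakes ones for approval."""
    if action in HIGH_STAKES and not approved_by_human:
        return "pending_approval"
    # A real system would call the payment or messaging API here.
    return f"executed:{action}"

low_risk = execute("send_reminder")
held = execute("issue_refund")
released = execute("issue_refund", approved_by_human=True)
```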

How AI Agents Function: The OODA Loop

Military strategist John Boyd developed the OODA loop: Observe, Orient, Decide, Act. AI agents follow a strikingly similar cycle.

Step one: the agent observes its environment. A new message arrives. A sensor reading changes. A scheduled trigger fires. Step two: it orients by placing that observation in context. Who is this customer? What's their history? What's the current goal? Step three: it decides on a course of action using its reasoning engine. Step four: it acts, executing the chosen response through its tool integrations.

Then the loop repeats. The agent observes the result of its action and starts again. Did the customer confirm the appointment? Great, close the loop. Did they ask a follow-up question? Back to orientation.
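
The four steps map naturally onto four small functions. The following Python sketch walks an appointment booking through the loop. The keyword matching is a deliberately naive stand-in for a reasoning engine, and all function names and replies are hypothetical.

```python
from typing import Optional

def observe(inbox: list[str]) -> Optional[str]:
    """Observe: take the next incoming message, if any."""
    return inbox.pop(0) if inbox else None

def orient(message: str, state: dict) -> dict:
    """Orient: combine the message with what is already known."""
    return {"text": message.lower(), "awaiting": state["awaiting_confirmation"]}

def decide(context: dict) -> str:
    """Decide: naive keyword rules standing in for an LLM."""
    if context["awaiting"] and "yes" in context["text"]:
        return "close"
    if "book" in context["text"]:
        return "propose_slot"
    return "ask_clarification"

def act(decision: str, state: dict, outbox: list[str]) -> None:
    """Act: send a reply and update state for the next pass."""
    if decision == "propose_slot":
        state["awaiting_confirmation"] = True
        outbox.append("Does Tuesday 10:00 work?")
    elif decision == "close":
        state["awaiting_confirmation"] = False
        outbox.append("Booked. See you then!")
    else:
        outbox.append("Could you tell me more?")

def run(inbox: list[str]) -> list[str]:
    state = {"awaiting_confirmation": False}
    outbox: list[str] = []
    while (message := observe(inbox)) is not None:
        act(decide(orient(message, state)), state, outbox)
    return outbox

replies = run(["I want to book a haircut", "Yes please"])
```

Note that the second pass behaves differently only because the first pass changed `state`. That carried-over state is what turns a one-shot responder into a loop.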

This continuous cycle is what gives agents their "alive" quality. They don't just respond once and stop. They persist, adapt, and iterate. The real-time decision-making AI agents market is predicted to reach approximately USD 215.01 billion by 2035, which tells you how much value businesses see in this always-on, always-adapting behavior.

Speed matters here. A slow OODA loop means a sluggish agent. If your agent takes 15 seconds to respond on WhatsApp, the customer has already switched tabs. Latency in the reasoning step is usually the bottleneck, which is why choosing the right LLM and optimizing prompt chains is so important.

Classification of AI Agent Types

Not all agents are built the same. The field borrows classification schemes from decades of AI research, and knowing the types helps you pick the right approach for your problem.

Simple Reflex vs. Goal-Based Agents

Simple reflex agents follow condition-action rules. If the customer says "cancel," route to the cancellation flow. If the message contains a tracking number, fetch the order status. These agents are fast and predictable, but they break when situations fall outside their rules.

Goal-based agents are more flexible. You give them an objective: "resolve this support ticket" or "qualify this lead." They plan a sequence of steps to reach that goal, adjusting as new information comes in. They're slower and more expensive to run, but they handle ambiguity far better.

Most real-world deployments use a hybrid. Simple reflex logic handles the predictable stuff (greetings, FAQs, routing). Goal-based reasoning kicks in for complex scenarios (negotiating a return, troubleshooting a technical issue). This hybrid approach keeps costs down while still handling edge cases gracefully.
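
A hybrid can be as simple as "try the rules first, fall back to planning." In the sketch below, the tracking-number format (two capital letters followed by six digits) is invented for the example, and `goal_based` is only a placeholder for a real planner.

```python
import re
from typing import Optional

def reflex(message: str) -> Optional[str]:
    """Condition-action rules: cheap, fast, and predictable."""
    if "cancel" in message.lower():
        return "route:cancellation_flow"
    match = re.search(r"\b[A-Z]{2}\d{6}\b", message)  # hypothetical format
    if match:
        return f"fetch_order_status:{match.group()}"
    return None  # no rule matched

def goal_based(message: str) -> str:
    """Placeholder for a planner that works toward 'resolve this ticket'."""
    return "plan:resolve_ticket"

def handle(message: str) -> str:
    # Rules handle the predictable cases; planning handles the rest.
    return reflex(message) or goal_based(message)

results = [
    handle("Please cancel my order"),
    handle("Where is AB123456?"),
    handle("My device keeps rebooting"),
]
```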

Multi-Agent Systems (MAS) and Collaboration

A single agent has limits. Multi-agent systems solve this by letting specialized agents collaborate. One agent handles intake and classification. Another handles billing questions. A third manages appointment scheduling. They pass context between each other like colleagues handing off a case file.

Multi-agent systems reportedly grew 327% in less than four months, which signals how quickly the industry is moving toward this model. The appeal is clear: smaller, specialized agents are easier to test, debug, and improve than one monolithic agent trying to do everything.

Coordination is the hard part. Agents need a shared communication protocol and a way to resolve conflicts. If the billing agent and the scheduling agent both try to message the customer simultaneously, you've got chaos. Thoughtful orchestration design prevents this.
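
One common way to avoid two agents messaging the same customer at once is to make them claim the conversation first. The `Orchestrator` below is a minimal, single-process sketch with hypothetical names. A distributed deployment would need a shared lock or queue instead of an in-memory dictionary.

```python
class Orchestrator:
    def __init__(self) -> None:
        # customer_id -> name of the agent currently holding the conversation
        self._owner: dict[str, str] = {}

    def claim(self, customer_id: str, agent: str) -> bool:
        """Grant the conversation to the first agent that asks for it."""
        owner = self._owner.setdefault(customer_id, agent)
        return owner == agent

    def release(self, customer_id: str, agent: str) -> None:
        """Only the current owner can hand the conversation back."""
        if self._owner.get(customer_id) == agent:
            del self._owner[customer_id]

orchestrator = Orchestrator()
billing_ok = orchestrator.claim("cust-1", "billing")        # first claim wins
scheduling_ok = orchestrator.claim("cust-1", "scheduling")  # must wait
orchestrator.release("cust-1", "billing")
scheduling_retry = orchestrator.claim("cust-1", "scheduling")
```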

Real-World Applications and Use Cases

Theory is nice. Practical examples are better. Here's where AI agents are already delivering measurable results.

Autonomous Software Development

Coding agents like Devin and GitHub Copilot Workspace can take a bug report, read the codebase, write a fix, run tests, and submit a pull request. They're not replacing senior developers, but they're handling the tedious stuff: boilerplate code, test generation, documentation updates.

For software teams, this means faster iteration cycles. A coding agent can work through a backlog of minor issues overnight. The human developer reviews the output in the morning. It's a force multiplier, not a replacement.

Dharamveer Prasad, an Application Security Engineer at Cybersmithsecure, put it well: "An agentic AI solution should not only generate insights but also help teams take action faster without adding complexity to their workflow." That principle applies beyond software development to any business process.

Personal Productivity and Virtual Assistants

On the consumer side, AI agents are becoming personal chief-of-staff tools. They manage calendars, summarize emails, draft responses, and coordinate schedules across multiple people. Apple's Siri, Google's Gemini, and Microsoft's Copilot are all racing toward this vision.

For businesses, the equivalent is a customer-facing agent that handles the full lifecycle: greeting, qualification, scheduling, follow-up, and feedback collection. A beauty salon could deploy an agent that books appointments on WhatsApp, sends reminders on Telegram, and collects reviews on Instagram, all from a single platform. Wexio offers 12+ industry-specific automation templates out of the box for exactly these kinds of workflows, so you're not building from scratch.

The US AI agents market alone is projected to reach USD 69 billion by 2034, and personal productivity is one of the fastest-growing segments driving that number.

Challenges and the Future of Agentic AI

The hype is real, but so are the problems. Deploying AI agents in production isn't plug-and-play. Here's what keeps practitioners up at night.

Reliability and Hallucination Risks

LLMs hallucinate. They confidently state things that aren't true. When a chatbot hallucinates, it's embarrassing. When an agent hallucinates and then acts on that hallucination, it's dangerous. Imagine an agent telling a customer their refund has been processed when it hasn't. That chips away at trust fast.

Mitigation strategies include grounding the agent's responses in retrieved documents (retrieval-augmented generation), adding validation steps before high-stakes actions, and building clear escalation triggers. If the agent's confidence score drops below a threshold, or if it detects negative sentiment, it should route to a human operator immediately. Reviewing chat transcripts regularly is free user research that helps you spot these failure modes before customers do.
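
The escalation trigger described above reduces to a small predicate. In this sketch, the thresholds (0.7 for confidence, -0.3 for sentiment on a -1 to 1 scale) are arbitrary assumptions, and how the two scores are produced is left to whatever model or classifier you use.

```python
def should_escalate(
    confidence: float,
    sentiment: float,
    min_confidence: float = 0.7,   # assumed threshold, tune per deployment
    min_sentiment: float = -0.3,   # assumed threshold on a -1..1 scale
) -> bool:
    """Route to a human if the agent is unsure or the customer is unhappy."""
    return confidence < min_confidence or sentiment < min_sentiment

confident_and_calm = should_escalate(confidence=0.9, sentiment=0.2)
unsure = should_escalate(confidence=0.5, sentiment=0.2)
angry_customer = should_escalate(confidence=0.9, sentiment=-0.8)
```

Either condition alone is enough to escalate, which errs on the side of involving a human.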

Reliability also depends on the tools the agent uses. If an API returns bad data, the agent's reasoning is compromised from the start. Monitoring and observability aren't glamorous, but they're essential.

Ethical Considerations and Security

When an agent can take actions autonomously, accountability gets murky. Who's responsible when an agent makes a bad decision? The developer? The business owner? The LLM provider? These questions don't have clean answers yet.

Data privacy is another minefield. Agents process sensitive customer information: names, payment details, health records. They need to operate within strict compliance frameworks. GDPR, HIPAA, and industry-specific regulations all apply. EU-hosted infrastructure and SOC 2-ready security postures aren't nice-to-haves; they're requirements for any serious deployment.

Bias is a subtler risk. If your training data skews toward certain demographics, your agent's behavior will too. Regular audits of agent decisions, especially in sensitive domains like finance and healthcare, are non-negotiable.

The future is bright but demands caution. As agents become more capable, the guardrails need to grow with them. The companies that get this balance right will build enormous competitive advantages. The ones that don't will make headlines for the wrong reasons.

What This Means for Your Business

AI agents aren't science fiction. They're here, they're multiplying, and they're getting better every quarter. The core definition is straightforward: an AI agent is software that perceives its environment, reasons about goals, and takes autonomous action. What makes it powerful is the loop: observe, orient, decide, act, repeat.

For small and mid-size businesses, the opportunity is to automate repetitive customer interactions without sacrificing quality. The right agent handles the predictable 80% of conversations while routing the tricky 20% to your team. That's not about replacing humans. It's about freeing them to do work that actually requires a human touch.

If you're ready to put AI agents to work across WhatsApp, Telegram, Instagram, and Viber without writing a single line of code, Wexio's platform gives you the tools to start today. The free tier includes 100 operations per month with no credit card required. Get started and see what agentic automation looks like in practice.

Sources

- Grand View Research: AI Agents Market Report

- DemandSage: AI Agents Market Size

- Databricks: State of AI Agents

- Joget: AI Agent Adoption in 2026

- Precedence Research: Real-Time Decision-Making AI Agents Market

- Juma AI: AI Agents Examples